TCP/IP Model: The Transport Layer: Introduction

To properly learn something, we have to start at the beginning. We will learn one concept at a time, process it, and then move on to the next.

The goal is consistent learning and absorbing information while feeling engaged and not overwhelmed.

I have divided the transport layer articles into three parts.

- Introduction to Transport layer

- UDP in Transport layer

- TCP in Transport layer

Goal of this article

I will introduce the transport layer of the TCP/IP five-layer network model.

The Transport Layer

- The fourth layer of the TCP/IP five-layer model is the transport layer.

- The transport layer carries information in units called segments.

- Transport layer protocols are implemented in the end systems but not in network routers.

- On the sending side, the transport layer converts the application-layer messages it receives from a sending application process into segments.

- On the receiving side, the network layer extracts the transport-layer segment from the datagram and passes the segment up to the transport layer.
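
To make the encapsulation step concrete, here is a minimal sketch of how a sender might wrap an application message in a segment and how a receiver might unwrap it. The header layout follows UDP (source port, destination port, length, and checksum, 16 bits each). The names `make_segment` and `parse_segment` are made up for illustration, and the checksum is simply left at zero, so treat this as a sketch and not as a real protocol implementation.

```python
import struct

# UDP-style header: source port, destination port, length, checksum (16 bits each).
HEADER_FORMAT = "!HHHH"  # "!" selects network (big-endian) byte order
HEADER_SIZE = struct.calcsize(HEADER_FORMAT)  # 8 bytes


def make_segment(src_port: int, dst_port: int, message: bytes) -> bytes:
    """Sending side: prepend a transport header to an application message."""
    length = HEADER_SIZE + len(message)
    checksum = 0  # assumption: checksum computation is skipped in this sketch
    header = struct.pack(HEADER_FORMAT, src_port, dst_port, length, checksum)
    return header + message


def parse_segment(segment: bytes):
    """Receiving side: split a segment back into ports and the message."""
    src_port, dst_port, length, _checksum = struct.unpack(
        HEADER_FORMAT, segment[:HEADER_SIZE]
    )
    return src_port, dst_port, segment[HEADER_SIZE:length]


segment = make_segment(50000, 53, b"hello")
print(parse_segment(segment))  # (50000, 53, b'hello')
```

The application message travels unchanged; the transport layer only adds the header on the way down and strips it on the way up.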

Functions of Transport Layer

- The transport layer has the critical role of providing communication services directly to the application processes running on different hosts.

- A transport layer protocol provides logical communication between application processes running on different hosts.

- A reliable transport protocol such as TCP ensures that the receiving application gets data in the same sequence in which the sender's transport layer sent it.

- This layer provides end-to-end delivery of data between hosts, which may or may not belong to the same subnet.

- All server processes that intend to communicate over the network are assigned well-known Transport Service Access Points (TSAPs), also known as port numbers.

Services of Transport Layer

Multiplexing and Demultiplexing

- Multiplexing and demultiplexing extend the host-to-host delivery service provided by the network layer to a process-to-process delivery service for applications running on the hosts.

- At the destination host, the transport layer receives segments from the network layer. The transport layer is responsible for delivering the data in these segments to the appropriate application process running in the host.

- A process (as part of a network application) can have one or more sockets, doors through which data passes from the network to the process, and through which data passes from the process to the network. Thus, the receiving host's transport layer does not deliver data directly to a process but instead to an intermediary socket. Because there can be more than one socket in the receiving host at any given time, each socket has a unique identifier. The format of the identifier depends on whether the socket is a UDP or a TCP socket.

- Let's consider how a receiving host directs an incoming transport-layer segment to the appropriate socket.

- Each transport-layer segment has a set of fields for this purpose. At the receiving end, the transport layer examines these fields to identify the receiving socket and then directs the segment to that socket. This job of delivering the data in a transport-layer segment to the correct socket is called demultiplexing.

- The job of gathering data chunks at the source host from different sockets, encapsulating each data chunk with header information (used later in demultiplexing) to create segments, and passing the segments to the network layer is called multiplexing.
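
The demultiplexing step described above can be sketched as a table lookup. This is a simplified model, not how any particular operating system does it: a UDP socket is identified by the destination IP address and port, while a TCP socket is identified by the full four-tuple of source and destination addresses and ports. The socket names and IP addresses below are invented for the example.

```python
# Hypothetical socket tables for one receiving host.
udp_sockets = {}  # (dst_ip, dst_port) -> socket name
tcp_sockets = {}  # (src_ip, src_port, dst_ip, dst_port) -> socket name


def demultiplex(protocol, src_ip, src_port, dst_ip, dst_port):
    """Return the socket that should receive a segment, or None if no match."""
    if protocol == "UDP":
        return udp_sockets.get((dst_ip, dst_port))
    if protocol == "TCP":
        return tcp_sockets.get((src_ip, src_port, dst_ip, dst_port))
    raise ValueError(f"unknown protocol: {protocol}")


udp_sockets[("10.0.0.5", 53)] = "dns-socket"
tcp_sockets[("10.0.0.7", 40001, "10.0.0.5", 80)] = "web-conn-A"
tcp_sockets[("10.0.0.8", 40001, "10.0.0.5", 80)] = "web-conn-B"

# Two UDP senders reach the same socket; two TCP clients get separate sockets.
print(demultiplex("UDP", "10.0.0.7", 1111, "10.0.0.5", 53))   # dns-socket
print(demultiplex("UDP", "10.0.0.8", 2222, "10.0.0.5", 53))   # dns-socket
print(demultiplex("TCP", "10.0.0.7", 40001, "10.0.0.5", 80))  # web-conn-A
print(demultiplex("TCP", "10.0.0.8", 40001, "10.0.0.5", 80))  # web-conn-B
```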

Reliable Data Transfer

- The problem of implementing reliable data transfer occurs not only at the transport layer but also at the link layer and the application layer. The general problem is thus of central importance to networking.

- TCP exploits many of the principles of reliable data transfer.

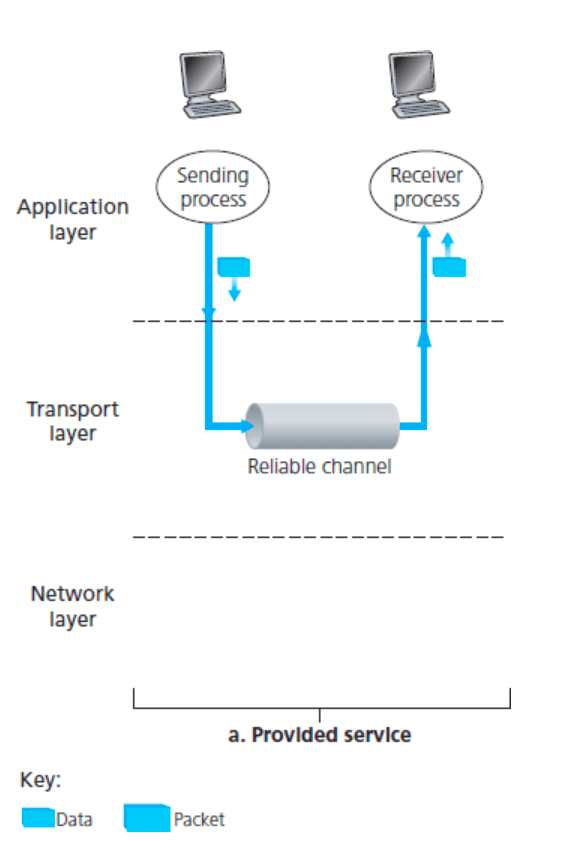

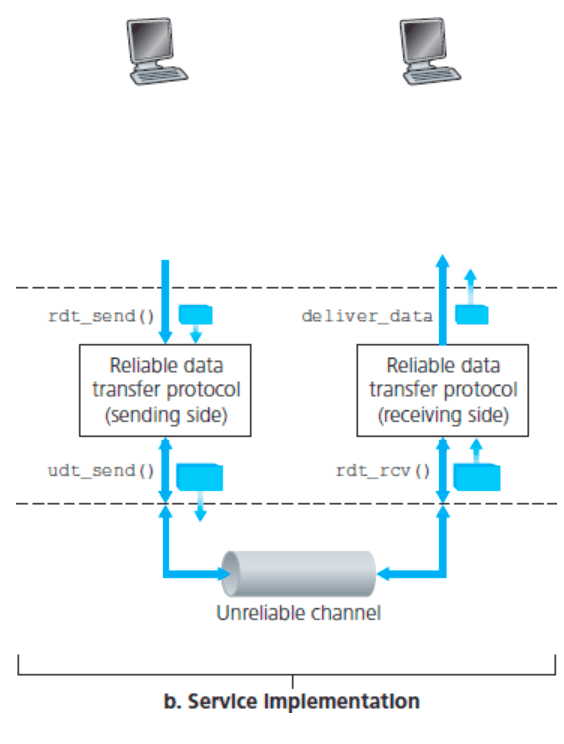

- The service abstraction provided to the upper-layer entities is a reliable channel through which data can be transferred. With a reliable channel, no transferred data bits are corrupted (flipped from 0 to 1, or vice versa) or lost, and all are delivered in the order in which they were sent. This is precisely the service model offered by TCP to the Internet applications that invoke it.

- It is the responsibility of a reliable data transfer protocol to implement this service abstraction. This task is made difficult because the layer below the reliable data transfer protocol may be unreliable. TCP is a reliable data transfer protocol implemented on top of an unreliable (IP) end-to-end network layer. More generally, the layer beneath the two reliably communicating endpoints might consist of a single physical link (as in the case of a link-level data transfer protocol) or a global internetwork (as in a transport-level protocol).
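
One way to see how a reliable channel can be built on top of an unreliable one is a stop-and-wait protocol with an alternating sequence bit. The sketch below is a toy simulation under stated assumptions: losses are scripted in advance (`make_channel` takes the indices of the transmissions to drop), bit corruption is not modeled, and a lost packet or lost ACK stands in for a timeout. It is far simpler than TCP and is only meant to show the retransmit-and-deduplicate idea.

```python
def make_channel(drops):
    """Return a send function that loses the transmissions whose index is in drops."""
    state = {"count": 0}

    def send(packet):
        index = state["count"]
        state["count"] += 1
        return None if index in drops else packet

    return send


def stop_and_wait(messages, send_data, send_ack):
    """Deliver messages in order, exactly once, despite lost data and ACKs."""
    delivered = []
    expected_seq = 0  # receiver state: next sequence bit it will accept
    transmissions = 0
    for i, payload in enumerate(messages):
        seq = i % 2  # alternating-bit sequence number
        acked = False
        while not acked:
            transmissions += 1
            arrived = send_data((seq, payload))
            if arrived is None:
                continue  # data lost: the sender times out and retransmits
            recv_seq, recv_payload = arrived
            if recv_seq == expected_seq:
                delivered.append(recv_payload)  # new data: pass it up once
                expected_seq = 1 - expected_seq
            # The receiver acknowledges what it got, duplicate or not.
            ack = send_ack(recv_seq)
            acked = ack == seq  # a lost ACK (None) triggers a retransmission
    return delivered, transmissions


data_channel = make_channel(drops={1})  # second data transmission is lost
ack_channel = make_channel(drops={0})   # first ACK is lost
print(stop_and_wait(["a", "b", "c"], data_channel, ack_channel))
# (['a', 'b', 'c'], 5)
```

Three messages need five transmissions here, yet the application still sees each message once and in order, which is the service abstraction described above.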

Congestion Control

- We consider the problem of congestion control in a general context, seeking to understand why congestion is a bad thing, how network congestion is manifested in the performance received by upper-layer applications, and various approaches that layers can take to avoid, or react to, network congestion.

Causes and the Costs of Congestion

There are three increasingly complex scenarios in which congestion occurs.

- Two Senders, a Router with Infinite Buffers

- Two Senders and a Router with Finite Buffers

- Four Senders, Routers with Finite Buffers, and Multihop Paths

The three cases mentioned above provide insights into the causes of network congestion.

- The arrival rate is greater than the service rate, which causes newly arrived packets to be dropped.

- Lack of control and agreement among the flows competing for network resources.

- Some or all applications might experience unbounded delay, which results in unfair resource allocation among multiple streams.
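
The first cause, an arrival rate higher than the service rate, can be illustrated with a tiny simulation of one router queue with a finite buffer. All numbers here are invented for the example, and time advances in fixed ticks, so this is only a rough sketch of the effect.

```python
def simulate_router(arrival_rate, service_rate, buffer_size, ticks):
    """Count delivered and dropped packets at a single router queue.

    Rates are in packets per tick; all parameters are illustrative assumptions.
    """
    queue = 0
    delivered = 0
    dropped = 0
    for _ in range(ticks):
        # Arrivals: packets that do not fit in the buffer are dropped.
        space = buffer_size - queue
        accepted = min(arrival_rate, space)
        dropped += arrival_rate - accepted
        queue += accepted
        # Service: the router forwards at most service_rate packets per tick.
        served = min(service_rate, queue)
        queue -= served
        delivered += served
    return delivered, dropped, queue


# Arrival rate above service rate: the buffer fills and packets are lost.
print(simulate_router(arrival_rate=5, service_rate=3, buffer_size=10, ticks=10))
# (30, 13, 7)

# Arrival rate below service rate: nothing is dropped.
print(simulate_router(arrival_rate=2, service_rate=3, buffer_size=10, ticks=10))
# (20, 0, 0)
```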

Solution for Congestion Control

Proper congestion control requires both application-specific requirements and information about the state of the system. The transport layer is a suitable place to implement congestion control because it sits between the application and network layers.

Open-Loop Control

- The transmission rate of a connection is determined during connection setup. Once the connection is set up, packets are sent without any feedback from either the network or the destination. This scheme is referred to as open-loop control.

- The source host receives no feedback from either the network or the destination host regarding packet loss or network congestion.

- Call Admission Control

- Policing (Leaky bucket)
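
As a rough illustration of leaky-bucket policing, the sketch below treats the bucket as a meter: every accepted packet raises the level by one, the level drains at a constant rate, and a packet that would overflow the bucket is rejected. The class name `LeakyBucket` and the chosen capacity and leak rate are assumptions for this example only.

```python
class LeakyBucket:
    """A leaky bucket used as a policer (hypothetical, simplified model)."""

    def __init__(self, capacity, leak_rate):
        self.capacity = capacity    # maximum bucket level, in packets
        self.leak_rate = leak_rate  # packets drained per time unit
        self.level = 0.0
        self.last_time = 0.0

    def allow(self, now):
        """Return True if a packet arriving at time `now` conforms."""
        elapsed = now - self.last_time
        self.level = max(0.0, self.level - elapsed * self.leak_rate)
        self.last_time = now
        if self.level + 1 <= self.capacity:
            self.level += 1
            return True
        return False  # bucket would overflow: the packet is policed (dropped)


bucket = LeakyBucket(capacity=3, leak_rate=1)
arrival_times = [0, 0, 0, 0, 1, 1, 5]
print([bucket.allow(t) for t in arrival_times])
# [True, True, True, False, True, False, True]
```

Note that the decision uses only the sender's own traffic and preset parameters, with no feedback from the network, which is what makes it an open-loop scheme.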

Closed-Loop Control / Feedback Control

- Connections are informed dynamically about the network's congestion state and are asked to adapt their rates accordingly. The source host, network, and destination host form a closed loop because the network or destination host provides feedback to the source host, which adjusts its transmission rate dynamically to fit network conditions.

- TCP congestion control
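
To give a feel for closed-loop control, here is a minimal sketch of additive increase, multiplicative decrease (AIMD), the basic idea behind TCP's congestion avoidance. It is heavily simplified: slow start, timeouts, and fast recovery are left out, the loss rounds are scripted by hand, and the function name `aimd` and its parameters are made up for illustration.

```python
def aimd(loss_rounds, rounds, start_window=1.0, increase=1.0, decrease=0.5):
    """Return the congestion window after each round.

    loss_rounds is the set of round numbers in which the sender detects loss,
    standing in for the feedback the network gives the source.
    """
    window = start_window
    history = []
    for r in range(rounds):
        if r in loss_rounds:
            window = max(1.0, window * decrease)  # multiplicative decrease
        else:
            window += increase  # additive increase
        history.append(window)
    return history


print(aimd(loss_rounds={4, 7}, rounds=9))
# [2.0, 3.0, 4.0, 5.0, 2.5, 3.5, 4.5, 2.25, 3.25]
```

The window grows slowly while the network reports no trouble and is cut sharply as soon as loss is detected, so the sending rate keeps adapting to the feedback it receives.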
